
For example, I've noticed that if I run my benchmarks with all the usual applications open (multiple Chrome instances with plenty of tabs, Teams and other messenger apps, etc.), they all take a bit longer than when I close basically all the apps on my computer. One of the issues with running benchmarks with python -m timeit is that sometimes other processes on your computer might affect the Python process and randomly slow it down. If you think executing different code snippets a different number of times affects your benchmarks, you can set this parameter to a predefined number. A slow function will be executed once, but a very fast one will run thousands of times. until the total execution time exceeds 0.2 seconds. By default, if you don't specify the '-n' (or -number) parameter, timeit will try to run your code 1, 2, 5, 10, 20. We can be a bit more strict and decide to execute our code the same number of times each time. python -m timeit -s "setup code" -n 10000 # So we can improve the above code and run it like this: python -m timeit -s "import numpy" "numpy.arange(10)". Whatever code you pass here will be executed but won't be part of the benchmarks. To separate the setup code from the benchmarks, timeit supports -s parameter. You want to see how long it takes to execute some functions from that module. But you probably don't want to benchmark how long it takes to import modules. The import will take most of the time during the benchmark. If we benchmark those two lines of code: import numpy Let's say you have an import statement that takes a relatively long time to import compared to executing a function from that module. However, python -m timeit approach has a major drawback - it doesn't separate the setup code from the code you want to benchmark. 
For example, to see how long it takes to sum up the first 1,000,000 numbers, we can run this code: python -m timeit "sum(range(1_000_001))" You can directly write multiple Python statements separated by semicolons, and that will work just fine. I like to put the code I want to benchmark inside a function for more clarity, but you don't have to do this. You can write python -m timeit your_code(), and Python will print out how long it took to run whatever your_code() does.

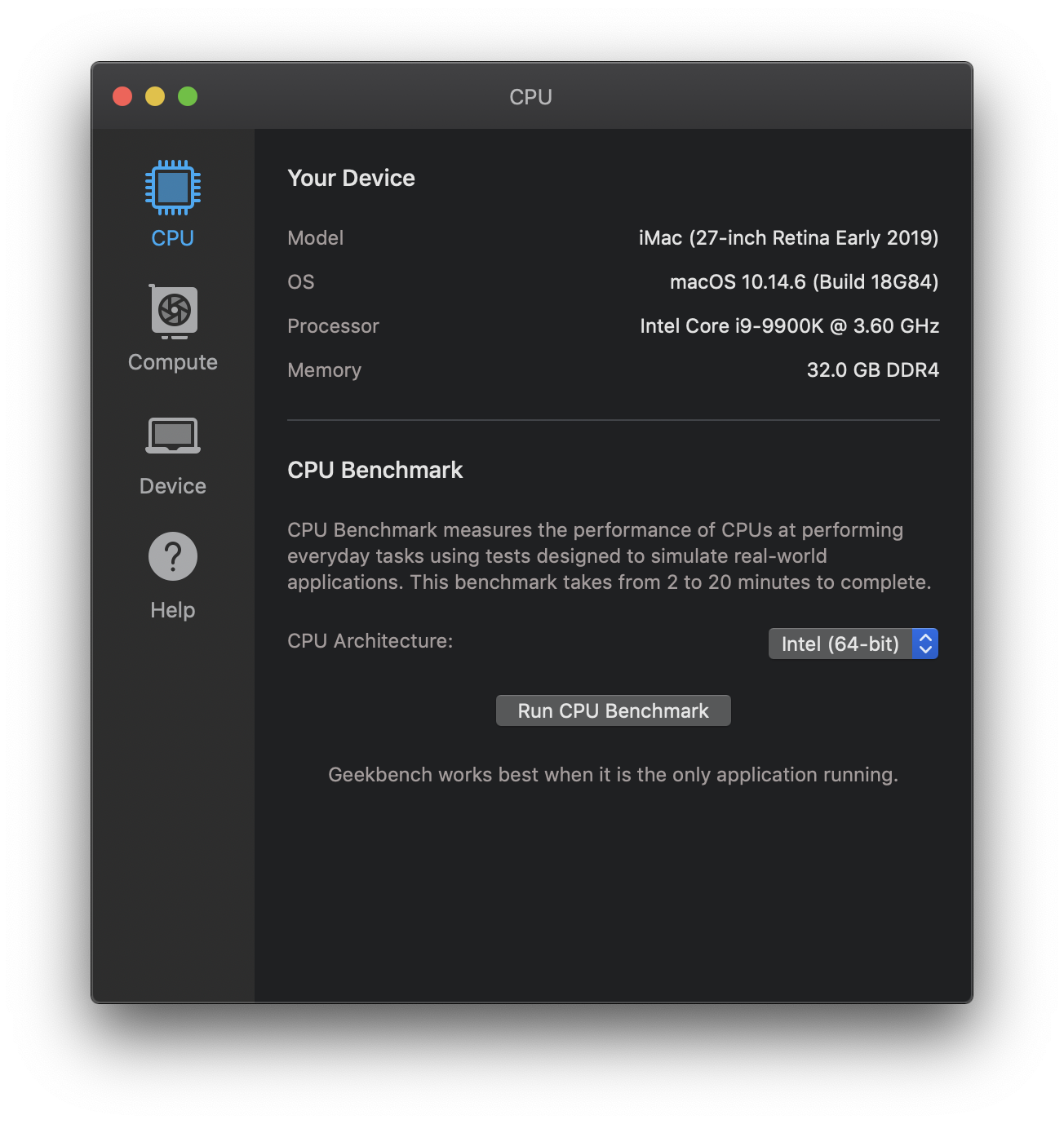

The easiest way to measure how long it takes to run some code is to use the timeit module. Plus, I will give you some rules of thumb for when each tool might be handy. At the end of the article, I will tell you which one I chose and why. So here are a couple of different tools and techniques I tried. But maybe it's too simple, and I owe my readers some way of benchmarking that won't be interfered by sudden CPU spikes on my computer? I could run python -m timeit, which is probably the simplest way of measuring how long it takes to execute some code.

While preparing to write the Writing Faster Python series, the first problem I faced was "How do I benchmark a piece of code in an objective yet uncomplicated way".
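The command-line options discussed in this article, -s for setup code and -n for a fixed number of loops, have counterparts in the timeit module's Python API. Here is a minimal sketch of how that could look. It is an illustration, not the benchmark setup used for the series. The standard-library math module stands in for numpy so that the example runs without third-party packages, and the names SETUP, STMT and NUMBER are made up for this example.

```python
import timeit

# Setup code runs once and is NOT included in the measured time,
# just like the -s option on the command line.
# Assumption: math stands in for numpy to avoid a third-party dependency.
SETUP = "import math"
STMT = "math.sqrt(144)"

# Fixed number of loops, similar in spirit to:
#   python -m timeit -s "import math" -n 10000 "math.sqrt(144)"
# (the command line additionally repeats the measurement and reports the best run).
NUMBER = 10_000
total_seconds = timeit.timeit(stmt=STMT, setup=SETUP, number=NUMBER)
per_loop = total_seconds / NUMBER
print(f"{NUMBER} loops, {per_loop * 1e9:.1f} nsec per loop")

# Without a fixed number, Timer.autorange() picks the loop count itself:
# it tries 1, 2, 5, 10, 20, ... loops until the total time is at least 0.2 s.
timer = timeit.Timer(stmt=STMT, setup=SETUP)
loops, elapsed = timer.autorange()
print(f"autorange chose {loops} loops ({elapsed:.3f} s in total)")
```

The numbers you get depend on your machine and on what else is running, so treat the printed values as rough indications. Fixing NUMBER makes two snippets run the same number of times, while autorange shows how many loops timeit would have chosen on its own.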
